
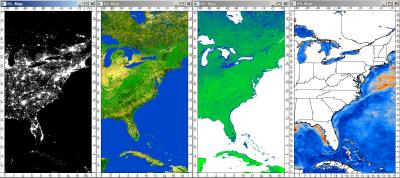

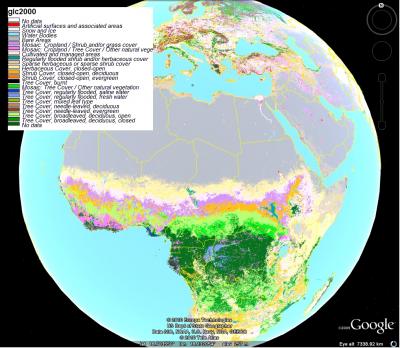

Updated Global data sets (worldmaps at 5km resolution)

I have just updated the small repository of publicly available data sets of interest for global modeling/mapping that I launched about a year ago. It now contains 68 layers at a resolution of 0.05 arcdegrees with complete world coverage (it used to cover 65S-65N only). The data is available for download from my wiki project. Each layer comes with a raster description file (*.rdc), which typically has the same name as the layer it describes (the fields are described in the README file). The raster description file also includes a link to an R script that (should) show all processing steps, from download to export of maps. I advise you to run the scripts step by step, because the data sets are usually huge. If you want to read more about what is available in this repository (and outside it), please read the complete article. You can also browse a gallery of worldmaps on Wikimedia Commons. Note that some maps have limited geographical coverage (e.g. PCEVI, GLC2000), which usually means that data for the polar regions is missing.

If you think that I have missed some important (publicly available) layers, please let me know. I am sure that much more is available (on and off the web), but I would at least like the collection to be representative. My next project will be to prepare the 1 km data (about 70% of the maps listed are also available at this resolution) and put them into a database format such as WKT Raster or rasdaman. This way, anybody will be able to overlay point, line and polygon features and fetch only the results of queries from the server. But it looks as if this will take more time than I initially anticipated.

ARE THESE MAPS JUST COPIES?

Many of the layers listed (ca. 20-30%) are simply resampled and reformatted maps that are already available from the original providers (e.g. pcclim, GLC2000, himpact, etc.).
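The *.rdc files are plain text, so they are easy to read from a script. The .rdc extension is the IDRISI raster documentation format, which, as far as I know, stores one "key : value" field per line. The sketch below assumes exactly that layout; the sample content and the example.org link are hypothetical, and the actual fields are the ones listed in the README file.

```python
from typing import Dict, List


def parse_rdc(text: str) -> Dict[str, List[str]]:
    """Parse the 'key : value' lines of a raster description (*.rdc) file.

    The key is everything before the first colon, so values that contain
    colons themselves (e.g. URLs) are kept whole. Keys are normalised to
    lower case; repeated keys (such as several 'lineage' lines) are
    collected in order into a list.
    """
    fields: Dict[str, List[str]] = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if not sep or not key.strip():
            continue  # skip blank lines and lines without a 'key :' part
        fields.setdefault(key.strip().lower(), []).append(value.strip())
    return fields


# Hypothetical example content (assumed layout, not copied from a real file).
SAMPLE = """file format : IDRISI Raster A.1
columns     : 7200
rows        : 3600
min. X      : -180.0
max. X      : 180.0
lineage     : http://example.org/processing_script.R
"""

rdc = parse_rdc(SAMPLE)
cols = int(rdc["columns"][0])
# Cell size in arcdegrees = longitude span / number of columns.
x_res = (float(rdc["max. x"][0]) - float(rdc["min. x"][0])) / cols
print(cols, x_res)          # 7200 0.05
print(rdc["lineage"][0])    # http://example.org/processing_script.R
```

Keeping every value as a list is a deliberate choice: it avoids silently overwriting fields that may legitimately appear more than once.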
The great majority of the maps, however, are original layers that you will not find elsewhere. For example, PCEVI1 is the first principal component of the complete time series of monthly MODIS EVI images (this image basically shows the average long-term 'biomass' in the world). If you wish to cite some of the maps I have prepared, please refer to chapter 4 of my book; otherwise, I advise you to refer to the original data providers. Each *.rdc file contains information about the data source, including a link to the source data and to a peer-reviewed publication that describes the dataset.

Personally, I find it frustrating that several global mapping projects in the world overlap (for example, there are at least four global land cover maps!). In some cases I solve this problem by simply averaging the maps (e.g. globedem is an average of two maps), but categorical maps cannot be averaged so easily. My second frustration is licensing and copyright. Some data producers (usually the USA mapping agencies) have a very clear policy and even support copying and distribution of the maps they produce (provided that the source is acknowledged); for others (e.g. himpacts) the terms are not really clear. I am only interested in processing and organizing publicly available data.

MEMORY LIMIT PROBLEMS

Going from 10 km to 5 km resolution caused many technical headaches. Just downloading the input data takes about one week (the input data I used to generate the 68 layers, which now take up 350MB, amount to about 500GB!). Each layer now has ca. 26M pixels, which obviously leads to memory and computational limits. For example, I doubt that you will be able to load these data into R on a standard PC (2-4GB RAM) or visualize the maps using spplot.
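A quick back-of-the-envelope calculation shows where the ca. 26M pixels come from and why a 2-4GB machine struggles. This sketch assumes each cell value is held as an 8-byte double (R's default numeric type) and counts only the raw values, ignoring object overhead and the temporary copies R often makes, so real memory use will be higher.

```python
def grid_shape(cell_size_deg, lon_span=360.0, lat_span=180.0):
    """Return (columns, rows) of a global lat/lon grid with square cells."""
    return round(lon_span / cell_size_deg), round(lat_span / cell_size_deg)


def memory_bytes(n_pixels, bytes_per_value=8, n_layers=1):
    """Raw memory for the cell values only (no overhead, no copies)."""
    return n_pixels * bytes_per_value * n_layers


cols, rows = grid_shape(0.05)            # 5 km (0.05 arcdegree) world grid
n_pixels = cols * rows
one_layer = memory_bytes(n_pixels)
all_layers = memory_bytes(n_pixels, n_layers=68)

print(f"{cols} x {rows} = {n_pixels:,} pixels")          # 7200 x 3600 = 25,920,000 pixels
print(f"one layer as doubles: {one_layer / 1e6:.0f} MB")  # 207 MB
print(f"68 layers: {all_layers / 1e9:.1f} GB")            # 14.1 GB
```

Halving the cell size (0.1 to 0.05 arcdegrees) quadruples the pixel count, which is why the step from 10 km to 5 km hurts so much.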
I also tried to derive some DEM parameters such as the SAGA TWI, height above channel network, etc., but the maps are simply too big (processing takes >24 hours), so it is very likely that you will also face memory and computational limits on your computers. PS: To run the processing I used a Dell 2.8GHz dual-processor machine with 64-bit Windows XP and 4GB of RAM, and this configuration was already on the edge.

I am really thankful to Frank Warmerdam and colleagues for providing the excellent GDAL utilities, which I used heavily to prepare the maps. I actually ran a small comparison between the gdalwarp utility, ERDAS Imagine and ArcGIS and found that the GDAL utilities are (1) faster and (2) easier to script (plus you get support for proj4 strings and the largest family of GIS data formats). Second on my list was SAGA GIS, which can also crunch huge data (up to 2GB) and has a large library of GIS operations. I highly recommend these two programs and would very much support their further development. In some cases I could not find the functionality I needed in the GDAL utilities or SAGA, so I used ILWIS GIS (e.g. to run principal component analysis and to derive the density of line and point features). Unfortunately, linking R and ILWIS is not as smooth, so I often ran part of the analysis in ILWIS separately. This is important information for people who plan to rely only on the R lineage scripts. I am looking forward to your feedback and further comments.
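Because the GDAL utilities are command-line programs, scripting them is mostly a matter of assembling arguments. The sketch below builds (but does not run) a gdalwarp call that resamples a source raster onto the 0.05 arcdegree world grid. The file names are hypothetical, and the choice of resampling method (nearest neighbour for categorical maps such as land cover, bilinear otherwise) is my assumption, not necessarily what the lineage scripts do.

```python
from typing import List


def gdalwarp_cmd(src: str, dst: str, cell_size: float = 0.05,
                 categorical: bool = False) -> List[str]:
    """Build a gdalwarp argument list that warps `src` onto a global WGS84 grid."""
    # Nearest neighbour keeps class codes intact for categorical maps;
    # bilinear is a common choice for continuous variables.
    method = "near" if categorical else "bilinear"
    return [
        "gdalwarp",
        "-t_srs", "EPSG:4326",              # target: geographic lat/lon, WGS84
        "-te", "-180", "-90", "180", "90",  # full world extent (xmin ymin xmax ymax)
        "-tr", str(cell_size), str(cell_size),
        "-r", method,
        "-of", "GTiff",
        "-overwrite",
        src, dst,
    ]


cmd = gdalwarp_cmd("landcover_src.tif", "landcover_5km.tif", categorical=True)
print(" ".join(cmd))
```

To execute it, pass the list to `subprocess.run(cmd, check=True)` on a machine where gdalwarp is on the PATH; building the command as a list avoids shell-quoting problems with file names.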
